While everyone is busy testing the new GPT-5.2 or letting Gemini 3 plan their holiday schedules, a much scarier reality is forming behind the scenes. We are currently watching the largest infrastructure gamble in human history, and the bill is finally coming due.

Tech giants like Microsoft, Google, and Amazon didn't just spend money this year. They poured over $405 billion into capital expenditures in 2025 alone. To put that in perspective, that is more than the annual GDP of many countries, all spent on chips and concrete in a single year.

The question nobody wants to answer is simple. How do we pay for it?

The Inference Trap

Most people think the cost of AI comes from "training" the models. That is the phase where the AI learns. It is expensive, but you only do it once per model. The real silent killer is "inference."

Inference is what happens when you actually use the AI. Every time you ask a chatbot a question, a massive server farm has to wake up. It processes your text and generates an answer. This costs electricity and water every single time.

New data from late 2025 shows a shocking gap. Training a frontier model might cost $190 million. But running that model for a year costs over $2 billion. This is not a software business anymore. It is a utility business. It is like running a power plant where the customers expect the electricity to be free.
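To make that gap concrete, here is a rough back-of-the-envelope sketch in Python. It uses only the two figures quoted above ($190 million to train, $2 billion per year to run) and treats them as illustrative estimates, not audited numbers. Since the article says "over $2 billion," the inference side is a lower bound. The function names are made up for this example, and the flat yearly cost is a simplifying assumption.

```python
# Figures quoted in the article; treat them as rough estimates.
TRAINING_COST_USD = 190e6          # one-time cost to train the model
ANNUAL_INFERENCE_COST_USD = 2e9    # yearly cost to serve it (a lower bound)


def inference_share(years: float) -> float:
    """Fraction of cumulative spend that is inference after `years` in service.

    Assumes the yearly inference cost stays flat, which is a simplification.
    """
    inference = ANNUAL_INFERENCE_COST_USD * years
    return inference / (TRAINING_COST_USD + inference)


def breakeven_days() -> float:
    """Days of serving until cumulative inference spend equals the training cost."""
    return TRAINING_COST_USD / ANNUAL_INFERENCE_COST_USD * 365


ratio = ANNUAL_INFERENCE_COST_USD / TRAINING_COST_USD
print(f"One year of inference is about {ratio:.1f}x the training cost")
print(f"Inference spend passes training after about {breakeven_days():.0f} days")
print(f"Inference share of total spend after 3 years: {inference_share(3):.1%}")
```

On these numbers, a year of running the model costs roughly ten times what it cost to train, and the serving bill overtakes the training bill in about five weeks. That is why the cost looks like a utility bill and not a one-time software expense.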

The Density Wall

This energy demand has hit a physical limit. For twenty years, data centers used air conditioning to keep servers cool. Fans blew cold air over the chips. That era is over.

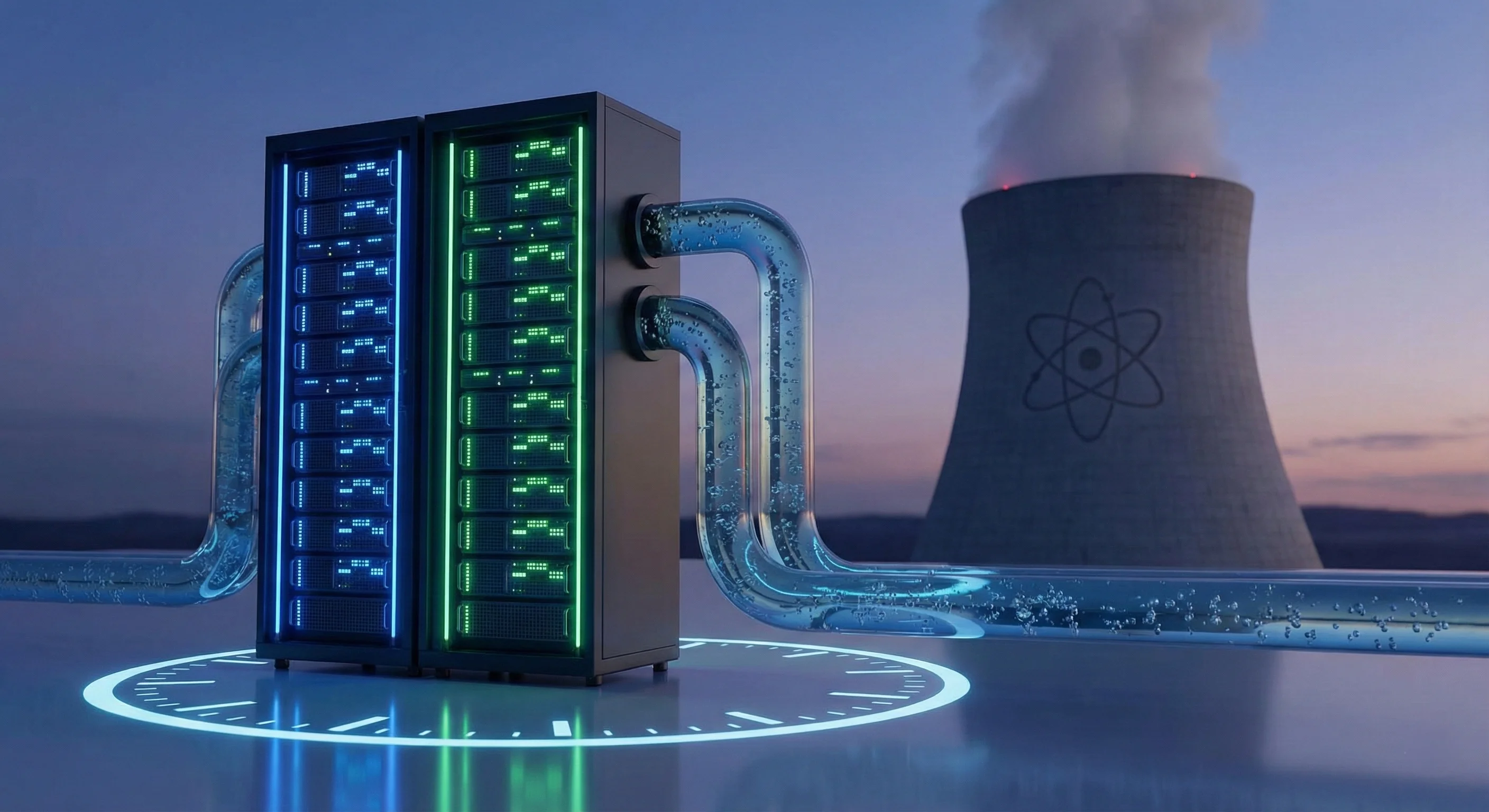

The new NVIDIA Blackwell Ultra chips run so hot that, packed into a dense rack, air cannot carry the heat away fast enough. You cannot cool them with fans. You need liquid.

This is why companies are not just upgrading old data centers. In many cases they are abandoning them. They are building massive new facilities designed for liquid cooling. These buildings have pipes full of coolant running to cold plates that sit directly on the silicon.

This construction is a nightmare. Many older data center floors cannot even support the weight of these new racks. A single NVL72 liquid-cooled rack weighs over 3,000 pounds. So companies have to pour new concrete and install industrial plumbing. Billions of dollars are evaporating into construction costs before a single dollar of profit is made.

The Nuclear Option

The energy grid cannot handle this. A single new AI data center can use as much power as a medium-sized city. In some regions, the power companies are saying "no" to new connection requests.

So Big Tech is lining up its own power supply.

Microsoft signed a 20-year deal to buy power from a restarted reactor at the Three Mile Island nuclear plant. Google has agreed to buy power from small modular reactors that have not been built yet. They are becoming energy companies because they have no choice.

The Profit Question

This brings us to the final problem. All this spending is based on a guess. The tech giants are betting that AI will generate trillions in revenue. But right now, much of the AI revenue is circular. Startups use venture capital money, some of it from the cloud providers themselves, to pay for cloud credits.

If the "killer app" does not arrive soon, we are in trouble. We are building nuclear plants to power chatbots. The infrastructure is real, but the business model is still theoretical.

This is not just a tech bubble. It is a physical one. And when the bills for the liquid cooling and the nuclear power finally come due, we will find out if the AI revolution can actually pay for itself.