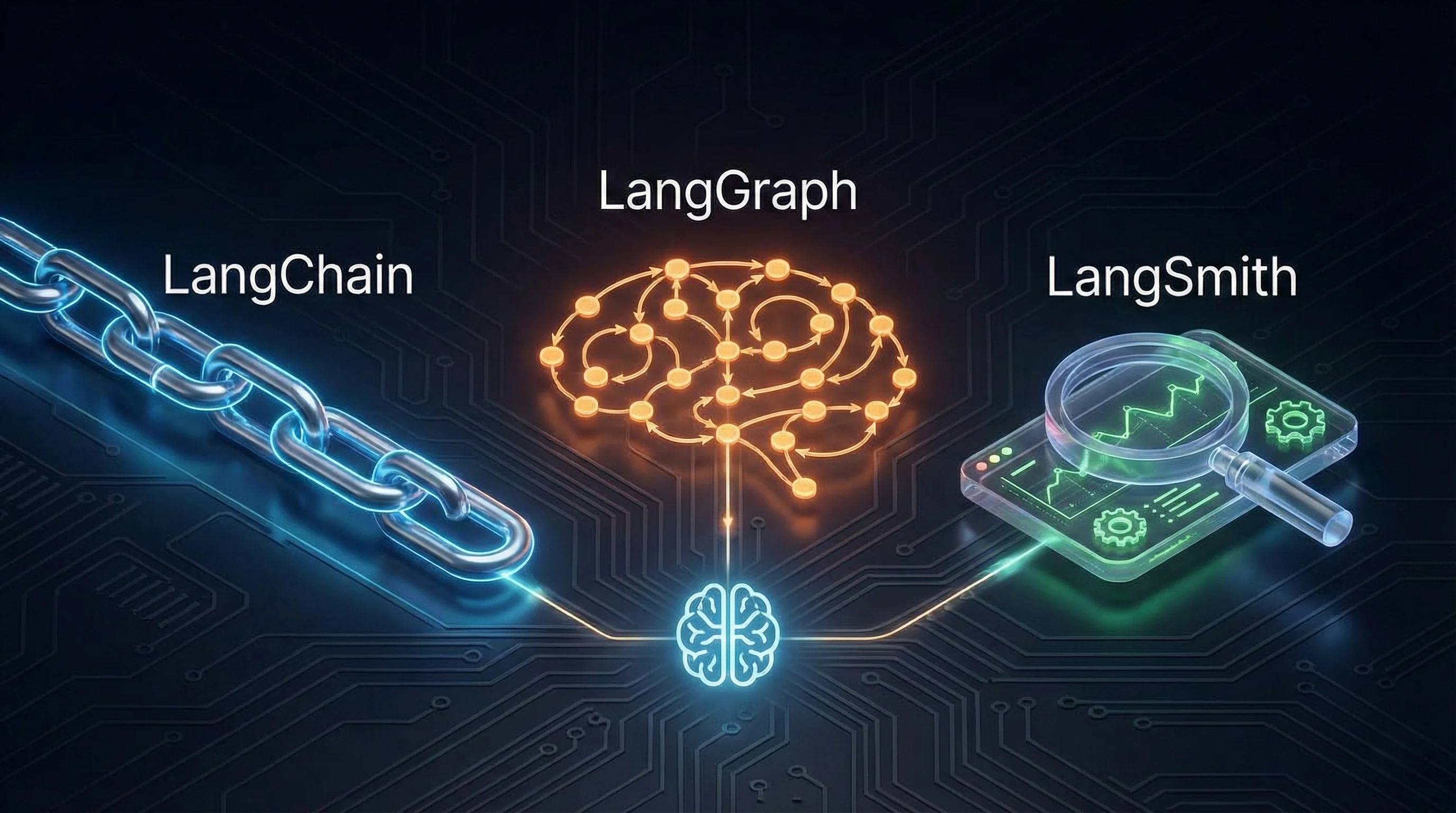

LangChain, LangGraph, and LangSmith:

The world of Large Language Models (LLMs) is rapidly evolving, bringing with it a suite of tools designed to help developers build amazing AI-powered applications. Among these, LangChain, LangGraph, and LangSmith stand out, but understanding their individual roles and how they work together can be a challenge. So, what's the real difference between them, and when should you use each one?

Let's break it down in simple terms.

What is LangChain?

Think of LangChain as your all-in-one toolkit for building applications powered by LLMs like GPT, Claude, or Gemini. It's designed to simplify the process of connecting LLMs to various data sources, structuring prompts, and managing memory, tools, and agents. LangChain excels at creating straightforward, linear workflows where the LLM performs predefined tasks.

Key Features of LangChain:

- Modularity: Chains, tools, prompts, and memory are modularized for flexibility.

- Ease of Use: Ideal for developers building LLM apps with minimal agentic complexity.

- Tool Integration: Easily connects with APIs, vector stores, databases, and more.

Use Cases:

- Chatbots

- Document Question Answering

- SQL query generation

- Retrieval Augmented Generation (RAG) pipelines

LangChain is your go-to tool for building simple, linear LLM applications quickly and efficiently.

Example: A tool that reads your code changes and automatically writes a polite, professional git commit message.

The Workflow is Linear: Read Diff → Summarize Changes → Format Message

Example Code:

    from langchain_core.output_parsers import StrOutputParser
    from langchain_core.prompts import ChatPromptTemplate
    from langchain_openai import ChatOpenAI

    # 1. Initialize the model (expects OPENAI_API_KEY in the environment)
    model = ChatOpenAI(model="gpt-4o")

    # 2. Create the linear instructions (the prompt)
    prompt = ChatPromptTemplate.from_template(
        "You are a helpful dev assistant. Read this code diff: {diff}. "
        "Write a concise, professional commit message for it."
    )

    # 3. Build the chain: each step's output feeds the next
    commit_chain = prompt | model | StrOutputParser()

    # 4. Run it
    diff_text = "Fix: Updated API endpoint from /v1 to /v2 in users.py"
    result = commit_chain.invoke({"diff": diff_text})
    print(result)
    # Example output (will vary between runs):
    # "refactor: update user API endpoint to v2 version"
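
The `|` in `prompt | model | StrOutputParser()` composes steps so that each step's output becomes the next step's input. As a rough mental model only, here is a plain-Python sketch of that idea. The `Step` class and the stand-in functions are hypothetical and are not LangChain's implementation; no real LLM is called.

```python
from typing import Any, Callable


class Step:
    """Hypothetical wrapper that makes a plain function composable with `|`."""

    def __init__(self, fn: Callable[[Any], Any]) -> None:
        self.fn = fn

    def __or__(self, other: "Step") -> "Step":
        # (a | b) returns a new Step that runs a, then feeds its result to b.
        return Step(lambda value: other.fn(self.fn(value)))

    def invoke(self, value: Any) -> Any:
        return self.fn(value)


# Stand-ins for the prompt, the model, and the output parser.
format_prompt = Step(lambda inputs: f"Write a commit message for: {inputs['diff']}")
fake_model = Step(lambda prompt: {"content": f"[model reply to] {prompt}"})
parse_output = Step(lambda message: message["content"])

chain = format_prompt | fake_model | parse_output
result = chain.invoke({"diff": "Updated API endpoint from /v1 to /v2"})
print(result)
```

The point of the sketch is the shape of the workflow: data moves in one direction, with no branching and no way back to an earlier step.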

What is LangGraph?

LangGraph is a lower-level orchestration framework from the LangChain team. It integrates closely with LangChain components but can also be used without them, and it is designed for complex, multi-step workflows that involve autonomous decision-making and iterative processes. It models a workflow as a directed graph of nodes that share state, which supports loops, branching, and multi-agent conversations, making it well suited to building sophisticated AI agents.

Key Features of LangGraph:

- State Machines: Constructs workflows as cyclic graphs, enabling loops and persistence.

- Asynchronous Agent Support: Enables multiple agents to collaborate or loop.

- Advanced Control Flow: Perfect for situations where you need feedback loops, branching logic, and retries.
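
To make "feedback loops and retries" concrete, here is a minimal plain-Python sketch of a cyclic workflow: generate a draft, validate it, and loop back until it passes or the retry budget runs out. Every name here (`generate`, `validate`, `route_after_validate`, `run`) is hypothetical, and the code does not use LangGraph's API; it only illustrates the control flow that a graph framework manages for you.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]


def generate(state: State) -> State:
    # Hypothetical worker: produce a new draft and count the attempt.
    attempt = state["attempts"] + 1
    return {"attempts": attempt, "draft": f"draft v{attempt}"}


def validate(state: State) -> State:
    # Hypothetical check: pretend the draft only passes on the third try.
    return {"valid": state["attempts"] >= 3}


def route_after_validate(state: State) -> str:
    # The feedback loop: go back to "generate" until valid or out of retries.
    if state["valid"] or state["attempts"] >= state["max_attempts"]:
        return "done"
    return "generate"


NODES: Dict[str, Callable[[State], State]] = {
    "generate": generate,
    "validate": validate,
}


def run(state: State) -> State:
    current = "generate"
    while current != "done":
        # Each node returns a partial update that is merged into the state.
        state = {**state, **NODES[current](state)}
        # Fixed edge generate -> validate; conditional edge after validate.
        current = "validate" if current == "generate" else route_after_validate(state)
    return state


final_state = run({"attempts": 0, "max_attempts": 5, "valid": False, "draft": ""})
print(final_state)
```

Writing the routing by hand works for two nodes, but it gets unwieldy as nodes and branches multiply, which is the gap a graph abstraction is meant to fill.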

Use Cases:

- AI agents

- Autonomous systems

- Iterative planning

- Complex tools orchestration

Example: Let's build an agent that plans a weekend trip. But there's a catch: it checks the weather. If it’s raining, it must scrap the outdoor plan and find indoor activities instead.

Example Code:

    from typing import TypedDict

    from langgraph.graph import StateGraph, START, END

    # 1. Define the "State" (the memory passed between steps)
    class AgentState(TypedDict):
        city: str
        plan: str
        weather: str

    # 2. Define the nodes (the workers); each returns a partial state update
    def planner_node(state: AgentState) -> dict:
        # Logic to generate a plan based on the city
        return {"plan": f"Visit the park in {state['city']}"}

    def weather_checker_node(state: AgentState) -> dict:
        # Logic to check the weather (rain is simulated here)
        return {"weather": "rainy"}

    def replan_node(state: AgentState) -> dict:
        # Logic to change the plan to indoor activities
        return {"plan": f"Visit the Museum in {state['city']} instead"}

    # 3. Define the logic (the decision)
    def should_replan(state: AgentState) -> str:
        if state["weather"] == "rainy":
            return "replan"
        return "end"

    # 4. Build the graph
    workflow = StateGraph(AgentState)
    workflow.add_node("planner", planner_node)
    workflow.add_node("check_weather", weather_checker_node)
    workflow.add_node("fix_plan", replan_node)

    workflow.add_edge(START, "planner")
    workflow.add_edge("planner", "check_weather")

    # The conditional edge (the "boss" logic):
    # the router's return value is mapped to the next node
    workflow.add_conditional_edges(
        "check_weather",
        should_replan,
        {
            "replan": "fix_plan",
            "end": END,
        },
    )
    workflow.add_edge("fix_plan", END)

    app = workflow.compile()

    # 5. Run it: only the city is supplied ("Paris" is just an example value);
    # the nodes fill in the rest. With the simulated rain above, the final
    # plan should be the museum visit.
    final_state = app.invoke({"city": "Paris"})
    print(final_state["plan"])

What is LangSmith?

LangSmith is the debugging and monitoring platform for LLM applications. While LangChain and LangGraph help you build apps, LangSmith helps you understand and improve them in production. It's like having a set of DevTools specifically for your LLM pipelines.

Key Features of LangSmith:

- Tracing and Debugging: Visualize how prompts, models, and chains interact.

- Evaluation: Use human or automated grading to test model performance.

- Prompt Experiments: Run A/B tests to optimize system prompts.
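
The evaluation and A/B testing ideas above boil down to running each prompt variant over the same dataset and scoring the outputs. Below is a minimal plain-Python sketch of that loop. The dataset, the two prompt variants, the `stub_model` function, and the exact-match grader are all hypothetical stand-ins; LangSmith ships its own dataset and evaluator tooling, which this sketch does not use.

```python
from typing import Dict, List

# Hypothetical mini dataset: each item has a question and a reference answer.
DATASET: List[Dict[str, str]] = [
    {"question": "2 + 2", "expected": "4"},
    {"question": "3 * 3", "expected": "9"},
]

# Two prompt variants to compare (an A/B test).
PROMPTS: Dict[str, str] = {
    "A": "Answer the question: {question}",
    "B": "Reply with the number only: {question}",
}

ANSWERS = {"2 + 2": "4", "3 * 3": "9"}


def stub_model(prompt: str) -> str:
    # Stand-in for an LLM call: verbose unless asked for the number only.
    question = prompt.rsplit(": ", 1)[1]
    answer = ANSWERS[question]
    if "number only" in prompt:
        return answer
    return f"The answer is {answer}."


def exact_match(output: str, expected: str) -> float:
    # Automated grader: 1.0 for an exact match, 0.0 otherwise.
    return 1.0 if output.strip() == expected else 0.0


def evaluate(template: str) -> float:
    # Average score of one prompt variant over the whole dataset.
    scores = [
        exact_match(stub_model(template.format(question=item["question"])), item["expected"])
        for item in DATASET
    ]
    return sum(scores) / len(scores)


scores = {name: evaluate(template) for name, template in PROMPTS.items()}
print(scores)
```

Exact match is a deliberately strict grader; in practice you would likely swap in a looser metric or human review, but the compare-variants-on-one-dataset structure stays the same.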

Use Cases:

- Teams deploying apps at scale who need transparency and version control.

Example: If your chatbot sometimes gives outdated answers, you can use LangSmith to trace the prompt that led to the incorrect output, view real-time logs, and fix the prompt with confidence.
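
To show what a trace contains, here is a minimal plain-Python sketch of a tracing decorator that records each step's inputs, output, and latency. The `traced` decorator, the in-memory `TRACE_LOG`, and the stub functions are hypothetical illustrations, not LangSmith's API. The prompt deliberately pins an old source, so the trace reveals why the answer is outdated.

```python
import functools
import time
from typing import Any, Callable, Dict, List

TRACE_LOG: List[Dict[str, Any]] = []  # hypothetical in-memory trace store


def traced(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record the name, inputs, output, and latency of every call to fn."""

    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        output = fn(*args, **kwargs)
        TRACE_LOG.append(
            {
                "step": fn.__name__,
                "inputs": {"args": args, "kwargs": kwargs},
                "output": output,
                "seconds": time.perf_counter() - start,
            }
        )
        return output

    return wrapper


@traced
def build_prompt(question: str) -> str:
    # The bug we want the trace to expose: the prompt pins an old source.
    return f"Answer using the 2021 handbook: {question}"


@traced
def stub_llm(prompt: str) -> str:
    # Stand-in for a real LLM call.
    return f"(answer based on) {prompt}"


answer = stub_llm(build_prompt("What is the refund policy?"))
for record in TRACE_LOG:
    print(record["step"], "->", record["output"])
```

With LangSmith itself you would not normally write this by hand: tracing for LangChain and LangGraph apps is typically switched on through environment variables such as `LANGSMITH_TRACING` and `LANGSMITH_API_KEY`. Check the current documentation for the exact names and setup.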

LangChain vs. LangGraph vs. LangSmith: A Detailed Comparison

To further clarify the differences, here's a detailed comparison:

| Feature | LangChain | LangGraph | LangSmith |
|---|---|---|---|
| Purpose | Build linear LLM applications | Handle complex, stateful workflows | Debug, monitor, and evaluate LLM apps |
| Ideal For | Simple chatbots, Q&A tools | Autonomous agents, iterative workflows | Production-grade observability |
| Control Flow | Sequential | Graph-based, branching & looping | Not applicable (used for monitoring) |
| Workflow Complexity | Basic | Advanced | Not applicable |
| Agent Support | Basic agent capabilities | Full agent support with state management | Full support for agent monitoring |
| Integration | Python, JS, APIs, vector DBs | Works with LangChain components and integrations | LangChain & LangGraph compatible |
| Deployment Focus | Prototypes, MVPs | Production agents | Production debugging & evaluation |
| Visualization | Via LangSmith | Via LangSmith | Full tracing and logs |
| Evaluation Tools | None | None | Prompt tests, metrics, user grading |

When to Use Which?

Choosing between LangChain, LangGraph, and LangSmith depends on your project's complexity and needs:

- Use LangChain if:

  - You're creating a simple chatbot or summarizing a document.

  - You prefer simplicity and fast prototyping.

  - Your use case doesn’t require advanced memory or loops.

- Use LangGraph if:

  - You need to create multi-agent, multi-step, or self-correcting workflows.

  - Your LLM app requires state management and branching logic.

  - You’re building production-grade agents or autonomous systems.

- Use LangSmith if:

  - You're ready to move to production and need visibility into your app.

  - You want to evaluate different prompts or models.

  - You're managing LLM behavior across teams or projects.

Real-World Stack Example

Let’s say you’re building a loan application assistant:

- Use LangChain to connect GPT-4o with loan document PDFs and an API.

- Use LangGraph to model the interaction: prequalify → ask missing info → risk check → approval flow.

- Use LangSmith to monitor what users asked, trace responses, and debug any hallucinations.

My Personal Take:

When I first started using this stack, the hardest part wasn't the code; it was the mindset shift.

I spent too long trying to force LangChain to do complex things. I created massive, messy chains with if/else statements trying to handle errors. It was a nightmare to debug.

The moment I switched to LangGraph, it clicked. Understanding "State", the idea that my agent has a memory file that gets passed around between functions, made building complex agents feel like standard software engineering again.

If you are just starting, here is my advice: use LangChain for the components (the prompt templates, the model connections), but use LangGraph to control the flow. And turn on LangSmith on Day 1. You will thank yourself later when your agent hallucinates and you actually know why.

Final Thoughts

LangChain, LangGraph, and LangSmith are complementary tools that form a powerful ecosystem for building and deploying LLM applications. By understanding their individual strengths and how they fit together, you can save time, improve reliability, and scale your AI projects effectively. Whether you're just starting or scaling to enterprise-grade agents, mastering these tools will give you a significant edge in the world of AI development.